We put each example into a column because our network takes in a column vector as input, since this is intuitively how most neural network diagrams are drawn. For m examples, we have an n × m array, where n is the number of input neurons and m is the number of examples in our dataset.

It's also useful to turn our data labels into column vectors. This lets us represent, say, the number 7 as the 10-element column vector `[0 0 0 0 0 0 0 1 0 0]'`. The first element stands for zero, and every element afterwards represents a digit from 1 to 9. If our digit is a 7, we then "activate" the 8th output neuron (since the first output neuron corresponds to 0).

The advantage of doing it this way is that we can use sigmoid activation functions for our neurons. Since a sigmoid function outputs a value between 0 and 1, a single output neuron cannot intuitively give us a '7' without also using some kind of linear function in the output layer. Sigmoids are useful in this context because we don't need a deep network to learn digit identification, and because they're well known.
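As a rough illustration of the label encoding described above, here is a minimal sketch. The label values and the `output` column are made up, and `idx`, `decoded`, `sigmoid` and `predicted` are names I've chosen for the example; only `LABEL` and `CLASSIFICATION` appear in the actual script.

```octave
% Hypothetical labels for five examples, stored as a 1 x m row vector.
LABEL = [7 0 3 9 7];
m = size(LABEL, 2);

% One column per example, one row per digit 0..9.
CLASSIFICATION = zeros(10, m);

% Digit d switches on row d + 1, because row 1 stands for the digit 0.
idx = sub2ind(size(CLASSIFICATION), LABEL + 1, 1:m);
CLASSIFICATION(idx) = 1;

% Going the other way: the row holding the 1, minus one, is the digit.
[~, row] = max(CLASSIFICATION, [], 1);
decoded = row - 1;

% A made-up output column of the kind a sigmoid layer might produce.
% Every value lies between 0 and 1, and the largest one picks the digit.
sigmoid = @(z) 1 ./ (1 + exp(-z));
output = sigmoid([-4 -3 -5 -2 -4 -3 -1 3 -2 -4]');
[~, best] = max(output);
predicted = best - 1;          % row 8 is the most active, so this is 7

disp(CLASSIFICATION(:, 1)');   % the column for a 7: a single 1 in position 8
```

Taking the most active row is only one way to read the output layer, but it fits naturally with the zeros-and-ones encoding.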

To make this data usable for our network, we need to put it into the format our network needs:

    % put data into the shape we want it (each example is a column vector)
    CLASSIFICATION = zeros(10, size(LABEL, 2));
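To see what that reshaping looks like end to end, here is a small sketch on made-up data. The `INPUT` name and the way `LABEL` is built are my assumptions, since those lines are not shown above; only the `CLASSIFICATION` line is taken from the script.

```octave
% Stand-in for the array read from train.csv: m = 4 rows, 785 columns
% (label in column 1, 784 pixel values after it). All values are made up.
m = 4;
tr = [[7; 0; 3; 9], zeros(m, 784)];

% Assumed step: transpose so that each example becomes a column.
INPUT = tr(:, 2:end)';    % 784 x m, one image per column
LABEL = tr(:, 1)';        % 1 x m, one label per column

% One 10-element column per example, to be filled with ones later.
CLASSIFICATION = zeros(10, size(LABEL, 2));

disp(size(INPUT));
disp(size(CLASSIFICATION));
```

With the real training set, `m` would simply be the number of rows in the CSV file.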


In this case, the training data comes in an m × 785 array, where m is the number of training examples. Why 785? Well, 785 is 784 (the size of an image that is 28 pixels wide and 28 pixels high) plus a single column for the training data label.
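The arithmetic is easy to check in Octave. This is just a sketch on a made-up array; `labels`, `pixels` and `pixels_per_image` are names I've picked for illustration.

```octave
% Made-up stand-in for the training array: 3 examples, 785 columns each.
tr = zeros(3, 785);
tr(:, 1) = [5; 0; 4];          % column 1 holds the label

pixels_per_image = 28 * 28;    % 784 pixel values per image

% Every row should be one label followed by one flattened image.
assert(size(tr, 2) == pixels_per_image + 1);

labels = tr(:, 1);             % m x 1
pixels = tr(:, 2:end);         % m x 784
```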


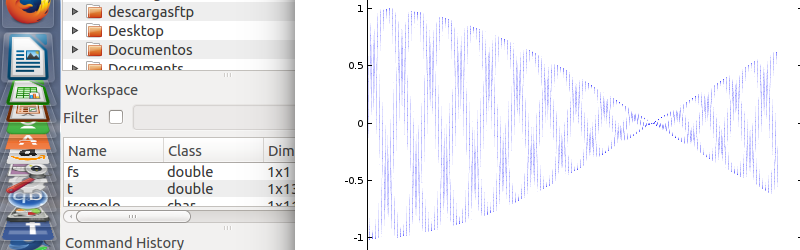

The script works through the following steps:

- Visualize the data so that we can verify it.
- Convert labels to an output form that is useful to us (in this case, zeros and ones in a 10 label set).
- Train the network using the training data and converted labels.
- Use the trained network to classify the test data set.
- Visualize the test set along with the network's prediction.

It seems like a lot, but most of this is fairly routine data manipulation. In addition to this, some of the work has already been done for us thanks to the good folks at MathWorks.

    addpath(genpath('/home/seanny/Dropbox/Documents/Octave Scripts/blaze'))
    tr = csvread('train.csv', 1, 0);              % read train.csv
    sub = csvread('test.csv', 1, 0);              % read test.csv
    digit = reshape(tr(i, 2:end), [28, 28])';     % row = 28 x 28 image
    title(num2str(tr(i, 1)))                      % show the label

First off, I'm adding the path to the neural network functions to our current load path. This means that we can access those functions without having to copy the files directly over to our working directory. The next thing this code does is read both the test and training data from the csv files. The last two lines turn row `i` of the training data back into a 28 x 28 image and use its label as the plot title. Credit where credit is due: the visualization part of this code is actually from MathWorks, and is useful for visualizing the data to make sure the representation is correct.
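The trailing `'` after `reshape` is easy to miss, so here is a tiny sketch of why it is there. It uses a made-up 3 x 3 "image" instead of a real 28 x 28 digit, and it assumes the CSV stores each image row by row, which is what the transpose in the code above suggests.

```octave
% A made-up 3 x 3 "image", standing in for a 28 x 28 digit.
img = [1 2 3;
       4 5 6;
       7 8 9];

% Assumption: the file stores the image row by row, so one CSV row
% would read 1 2 3 4 5 6 7 8 9.
row = reshape(img', 1, []);

% reshape fills column by column, so on its own it gives a transposed image ...
wrong = reshape(row, [3, 3]);

% ... and the trailing ' puts it the right way round again.
digit = reshape(row, [3, 3])';

disp(digit);
```

With a real digit, passing the result to `imagesc(digit)` after `colormap(gray)` is one way to display it.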


So this is a quick follow-up to my previous post, where I went through a neural network implementation for Octave. This time I'm going to be using the same network to classify a series of handwritten digits, the source of which you can find here.